Safety Theories and Models for Managing Risk in Adventure Tourism Programs

It was the second day of a four-day ice-climbing course based out of the town of Field, British Columbia, in Yoho National Park in the Canadian Rockies.

Two guides and four guests were climbing at Massey’s, a popular icefall climbing route on Mount Stephen, within walking distance of Field. The group had spent the morning on a multi-pitch climb of the route.

In the afternoon, a clinic on building ice-climbing anchors was held at the base of the frozen waterfall. Unknown to the climbers, about a kilometer above them, a layer of snow detached from the rest of the snowpack. It picked up mass as it descended the mountain. With little to no warning, the avalanche hit the group. Two participants were caught in the avalanche and swept more than 70 meters from the base of the climb.

One climber, who was only partially buried, was extricated without serious physical injury. The other was uncovered from a depth of approximately 1.8 meters, about 30 minutes after the avalanche. Unresponsive and not breathing, she was flown by helicopter to an ambulance and transported to a medical facility, where she was pronounced dead.

This is the kind of incident that adventure tourism operators hope will never come to pass: unexpected, impossible to anticipate with certainty, and catastrophic in its impact. How could this have happened? Could it have been prevented, or is it an example of the inherent risk of outdoor adventure?

The trip organizer was an experienced and well-respected mountain guide—certified as a full Mountain Guide with the Association of Canadian Mountain Guides since 2008, guiding ice climbing in the Canadian Rockies every winter since 2005, an ACMG instructor and examiner, and with an extensive resume of ice climbs around the world.

The guide on site at Massey’s the day of the incident was likewise impressively qualified: an IFMGA Mountain Guide since 2016, with decades of ice climbing experience, a graduate of the Canadian Avalanche Association’s Avalanche Operations Level 2 course, and experienced in guiding at Massey’s.

Massey’s was a well-known ice climbing site. Guides had evaluated snow conditions, the weather forecast, and Parks Canada’s daily avalanche ratings. Participants were briefed, and each brought their avalanche probe, shovel, and beacon.

And yet, on March 11, 2019, an avalanche cascaded down on unsuspecting climbers, carrying one—a devoted lover of outdoor adventures, accomplished medical professional, beloved friend and wife—to her tragic and untimely death.

We know that safety incidents will occur in outdoor adventures. What we can’t predict with certainty, however, is what kind of incident will occur, or when, or where, or who will be involved.

It’s this unpredictability that poses a challenge to leaders of adventure tourism and related wilderness, experiential, travel and outdoor programs. How do we anticipate the unexpected? How do we guard against unforeseen breakdowns in a safety system that is already full of policies, procedures, and documentation designed to prevent mishaps?

Adventure tourism operators aren’t the only organizations to struggle with preventing safety incidents. Airlines strive to avoid plane crashes. Nuclear power plant operators work to prevent meltdowns. Hospitals seek to eliminate wrong-limb surgical amputations.

Aviation, power generation, healthcare and other large industries have invested heavily in researching why incidents occur—and by extension, how they can be prevented. They have funded research scientists to conduct investigations, develop theories of incident causation, and establish models that represent those incident causation theories. There are academic journals, conferences, and an ever-growing literature in the field of risk management.

Outdoor adventure programs can learn from the work that springs from these investments in advancing safety science. Just as the highest-quality backcountry travel programs pay attention to the best thinking in skills training, equipment management, and guiding practices, adventure tourism programs can benefit greatly from keeping abreast of the best thinking in safety science across industries, and applying cutting-edge risk management theories and models to help outdoor recreation and outdoor education participants have extraordinary outdoor adventures with good safety outcomes.

Let’s take a look at safety thinking, and the risk management theories and models that have evolved over time. We’ll explore how safety science has advanced over the last 100 years. And we’ll examine how the most current thinking in risk management—revolving around the idea of complex sociotechnical systems—can be applied to improve safety outcomes at outdoor adventure programs.

The field of risk management includes career specialists in safety science, a wide variety of theories and models, numerous academic journals, and PhD programs in risk management. From this, best practices have evolved that can be applied across industries—from aviation to alpine mountaineering.

The Evolution of Safety Thinking: Four Ages

Let’s begin by briefly considering the trajectory of safety science from the Industrial Revolution to the present day.

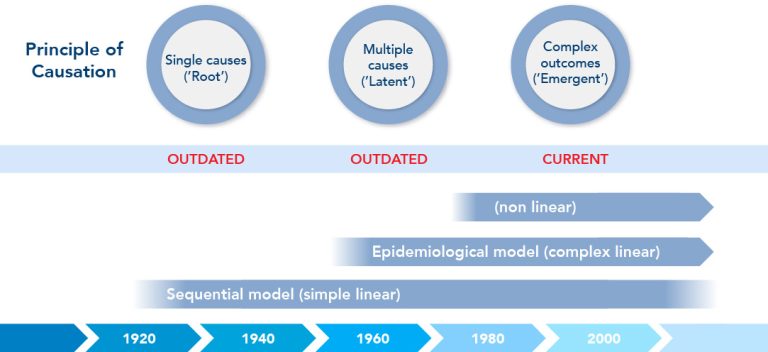

The evolution of safety thinking can be broken down into several eras, each representing a distinct approach to understanding why incidents occur, and how they might be prevented. The model below illustrates four separate eras of safety thinking:

- The Age of Technology,

- The Age of Human Factors,

- The Age of Safety Management, and

- The Age of Systems Thinking.

The Age of Technology

In this model, adapted from Waterson et al., we see the 1800s version of safety thinking as a mechanistic model. The predominant understanding of incident causation was a linear one, the “domino model,” in which incidents were seen as resulting from a chain of events.

This linear chain-of-causation thinking is exemplified in the following 13th-century nursery rhyme:

For want of a nail the horseshoe was lost.

For want of a horseshoe the horse was lost.

For want of a horse the rider was lost.

For want of a rider the battle was lost.

For want of a battle the kingdom was lost.

Root Cause Analysis was a core element of safety thinking at this time: if one could only identify the originating cause of the problem (want of a nail, in the example above), then the incident (loss of a kingdom) could be prevented.

The Age of Human Factors

Fast-forward to about 50 years ago, and human behavior, specifically human error, had come to be seen as a major cause of incidents. If we can control people’s actions, the thinking went, then we can prevent incidents from occurring!

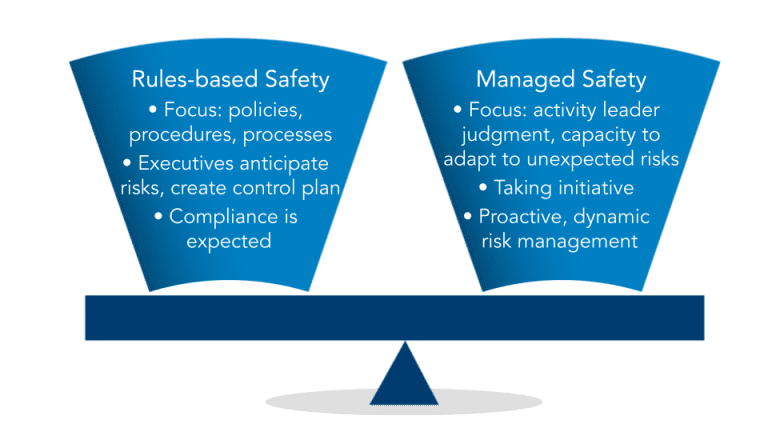

This “Age of Human Factors” brings detailed policy registers, procedures handbooks, operating manuals, and rulebooks of every sort. Control human behavior—the most significant, yet most unpredictable, element of any safety system—and you control risk. This marks the advent of rules-based safety.

It’s important to note that each step in the history of safety thinking represents a cumulative advance of wisdom regarding how to prevent incidents. The older theories and models are not to be discarded; they are to be built upon. Safety thinking has moved beyond a mechanistic search for incident causes through Root Cause Analysis, yet Root Cause Analysis can still be useful. What has changed, crucially, is that more sophisticated and effective tools have been added to the safety manager’s toolkit.

The Age of Safety Management

It didn’t take long, however, for management to recognize that (surprise!) people don’t always follow the rules. Nor can rules be written to address every conceivable situation, every possible permutation of circumstances in which risk factors appear.

Then, around the 1980s, came a recognition that the use of procedures and inflexible rules has to be balanced with allowing people to use their good judgment and to adapt dynamically to a constantly changing risk environment.

This is the birth of “Integrated Safety Culture,” which combines rules-based safety (providing useful guidance to support wise decision-making in times of stress) with the flexibility for individuals to make their own decisions, even if that means not following the documented procedures or the pre-existing plan.

The Age of Systems Thinking

Nuclear power plants are big, complicated things. They have lots of mechanical components, and are operated and maintained by large teams of personnel. Although much attention is put towards their safe operation, dangerous meltdowns continue to occur—the Three Mile Island reactor partial meltdown in 1979, the Chernobyl disaster in 1986, and the Fukushima Daiichi nuclear disaster in 2011.

It became clear that despite detailed engineering systems, extensive personnel training and oversight, and many other safety measures, managers seemed unable to understand and control the enormous complexity of a nuclear generating station. The system was too complex, and the safety models in place to prevent meltdowns simply weren’t 100 percent effective. A new, more sophisticated model of incident causation, one that could account for the complex mix of people and technology, was needed.

This led to the development of complex sociotechnical systems theory.

Complex sociotechnical systems theory begins by recognizing the profound complexity of “systems,” whether a nuclear power plant or a heli-skiing outfit. It attempts to understand how people and their behavior influence safety, and how technology, from pressure release valves in a reactor to high-altitude medical protocols on an alpine trek, influences safety outcomes. And it seeks to understand the interaction of people (the “socio-”) with the technologies and items they work with (the “technical”), within a system that is also subject to outside influences and is constantly in flux.

Systems thinking, the application of complex sociotechnical systems (STS) theory, represents the most current and most advanced approach to risk management. It is, however, more abstract and harder to understand than simpler, albeit less effective, models. It’s therefore important to invest in understanding what complex STS theory means, and how it can be applied in the adventure tourism setting.

One of the principal ideas of systems thinking is the recognition that we cannot have full awareness of, let alone control of, the complex system of an airplane, a hospital operating room, or a backcountry ski touring expedition. We therefore need to build in extra safeguards and capacities so that when an inevitable breakdown in our safety system occurs, the system is resilient enough to withstand that breakdown without catastrophic failure.

This has been termed “resilience engineering,” and is a fundamental approach to applying systems thinking to safety in the travel and experiential education contexts. We’ll further examine the resilience engineering concept, as it applies to adventure tourism safety, shortly.

The Evolution of Safety Thinking: Incident Causation

Let’s continue exploring how ideas of risk management have evolved over the decades. This time, we’ll look at how thinking about incident causation has become more sophisticated, and an increasingly accurate representation of the factors that lead to a mishap.

The Single-Cause Incident Concept: A Simple Linear Model

In the past, an incident, whether on an outdoor adventure or anywhere else, was considered to be due to a single causal element. The boots fit poorly, and thus caused the blister. The blister popped, which caused the infection. The infection got worse, so the trekker ended up in the hospital. The root cause: ill-fitting boots. The sequence: a linear one, from the root cause, to an unanticipated mishap, to an injury or other loss.

In the image below, from the Safety Institute of Australia, building on the work of Hollnagel, we see this illustrated as the “single cause” principle of causation, which is part of a simple linear model of how incidents occur. The chain of causation is a simple linear sequence.

This idea gained popularity in 1931, when Herbert Heinrich published the first edition of his influential book, Industrial Accident Prevention.

Heinrich used a sequence of falling dominoes in his text to show how an accident came about:

Simply eliminate one step in the chain, and voila! No accident.
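The domino logic can be made concrete with a short Python sketch. It reuses the blister example from above as a hypothetical chain; the step names and the `run_chain` and `accident_occurs` helpers are illustrative assumptions, not anything from Heinrich’s book. In a strictly linear model, each step can happen only if the one before it did, so removing any single link stops the sequence.

```python
from typing import List, Set

# Hypothetical domino chain, reusing the blister example.
# The last step is the loss the model is trying to prevent.
CHAIN: List[str] = [
    "ill-fitting boots",
    "blister forms",
    "blister pops",
    "infection develops",
    "trekker hospitalized",
]


def run_chain(chain: List[str], removed: Set[str]) -> List[str]:
    """Return the steps that actually occur.

    Each domino falls only if the one before it fell, so the chain
    halts at the first step that has been removed.
    """
    happened: List[str] = []
    for step in chain:
        if step in removed:
            break
        happened.append(step)
    return happened


def accident_occurs(chain: List[str], removed: Set[str]) -> bool:
    # The loss occurs only if every domino, including the last, falls.
    return len(run_chain(chain, removed)) == len(chain)


if __name__ == "__main__":
    print(accident_occurs(CHAIN, set()))             # True
    print(accident_occurs(CHAIN, {"blister pops"}))  # False
    print(run_chain(CHAIN, {"blister pops"}))
```

The simplicity is both the appeal and the weakness: the model assumes one neat chain of removable links, an assumption the later models in this section move away from.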

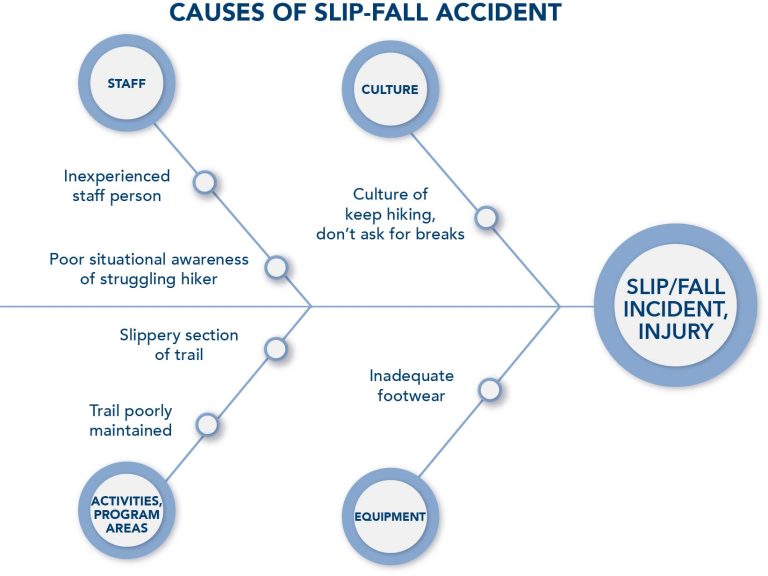

Fault Tree Analysis is another simplistic, linear-style model. A related cause-and-effect tool is the Fishbone Diagram.

Here we see all the factors that came together to lead to an adventure tourist slipping and falling on a trail. The hiking guide was naïve and inattentive; the culture on the trip was “shut up and keep hiking;” the trail was slick and ill-maintained, and the client’s sneakers provided insufficient traction.

The Multiple-Causes Incident Concept: A Complex Linear Model

Later, it became increasingly clear that multiple factors were involved in causing an incident. An event occurred—a person went on a hike wearing too-small boots. But that doesn’t necessarily lead to an infected blister. Perhaps the guide asks hikers to check for hot spots. Or the company instructs participants to break in their boots before commencing their trip, during which time the poor fit could be discovered and rectified.

But if the guides are not well-trained and proactive about safety, and if the adventure travel operator does not provide a detailed gear list with instructions well in advance of the outdoor experience, these “latent conditions” can combine with the event—the inadequate footwear—to cause an incident.

This is the “epidemiological” model. It features one or more events, plus one or more latent conditions. The “epidemiological” term references disease transmission modeling, where, for example, a person ventures into the forest in search of wild game (the event), and encounters an animal such as a bat or civet cat that harbors a pathogen (the disease reservoir). The person then comes back into a populated area, leading to an outbreak or epidemic of disease.

This incident model is still a relatively simplistic, linear model, but it was also one of the first to represent incidents as happening within a system of elements.

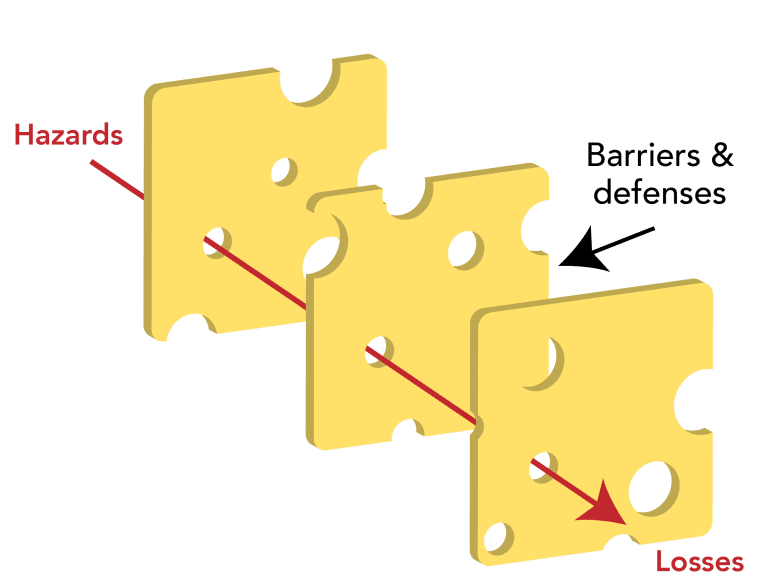

The epidemiological model gained prominence in 1990, after James Reason published a paper on the topic in the Philosophical Transactions of the Royal Society.

Reason described risk management systems as a series of barriers and defenses, an idea often pictured as slices of Swiss cheese. If a hazard found its way through the holes in each of the barriers and defenses, an incident would occur. Only when the holes in every layer lined up would the hazard pass all the obstacles and cause an incident.

Although this model has been superseded by complex systems models that more accurately represent incident causation, its evocative imagery keeps it in the public consciousness; it was cited in the New York Times in August 2021 in the context of COVID-19 safety.
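Reason’s barriers-and-holes idea can be sketched as a small Monte Carlo simulation in Python. The sketch assumes, for illustration only, that each barrier independently has a hole with a fixed probability; the barrier names (borrowed from the trail-fall example above) and the probabilities are hypothetical, not values from Reason’s paper. An incident is counted only when the hazard finds a hole in every barrier on the same trial.

```python
import random
from typing import Dict

# Hypothetical defenses against a trail fall, each with an assumed
# probability of having a "hole" (failing) on any given outing.
BARRIERS: Dict[str, float] = {
    "footwear check before the trip": 0.30,
    "attentive guide": 0.20,
    "maintained trail": 0.50,
}


def hazard_gets_through(barriers: Dict[str, float], rng: random.Random) -> bool:
    """The hazard causes an incident only if every barrier has a hole."""
    return all(rng.random() < p_hole for p_hole in barriers.values())


def simulated_incident_rate(barriers: Dict[str, float],
                            trials: int = 100_000, seed: int = 1) -> float:
    rng = random.Random(seed)  # seeded so results are reproducible
    incidents = sum(hazard_gets_through(barriers, rng) for _ in range(trials))
    return incidents / trials


def independent_incident_rate(barriers: Dict[str, float]) -> float:
    # If holes are independent, they line up with probability equal to
    # the product of the individual hole probabilities (0.3 * 0.2 * 0.5).
    rate = 1.0
    for p_hole in barriers.values():
        rate *= p_hole
    return rate


if __name__ == "__main__":
    print(f"simulated:  {simulated_incident_rate(BARRIERS):.4f}")
    print(f"analytical: {independent_incident_rate(BARRIERS):.4f}")
```

Lowering any one hole probability lowers the product, which is the model’s appeal. Its weak point is the independence assumption: a single latent condition, such as a rushed schedule, can open holes in several barriers at once, and capturing that kind of interaction is what complex systems models aim to do.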

Incident Causation as Taking Place within a Complex System

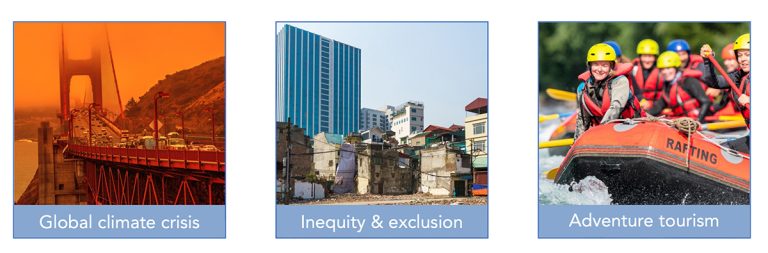

Finally, risk management theoreticians arrived at what represents the current best thinking in incident causation: the complex systems model.

Here, a complex and ever-changing array of social and technological factors interact in impossible-to-predict ways, leading to an incident. This is the idea of complex sociotechnical systems, as applied to risk management.

Examples of complex systems include the global climate crisis; issues of diversity, equity, and inclusion; and adventure tourism programs.

Complex systems are characterized by:

- Difficulty in achieving widely shared recognition that a problem even exists, and agreeing on a shared definition of the problem

- Difficulty identifying all the specific factors that influence the problem

- Limited or no influence or control over some causal elements of the problem

- Uncertainty about the impacts of specific interventions

- Incomplete information about the causes of the problem and the effectiveness of potential solutions

- A constantly shifting landscape where the nature of the problem itself and potential solutions are always changing

This model is the most accurate we have to date. However, it’s also the most difficult to conceptualize and work with.

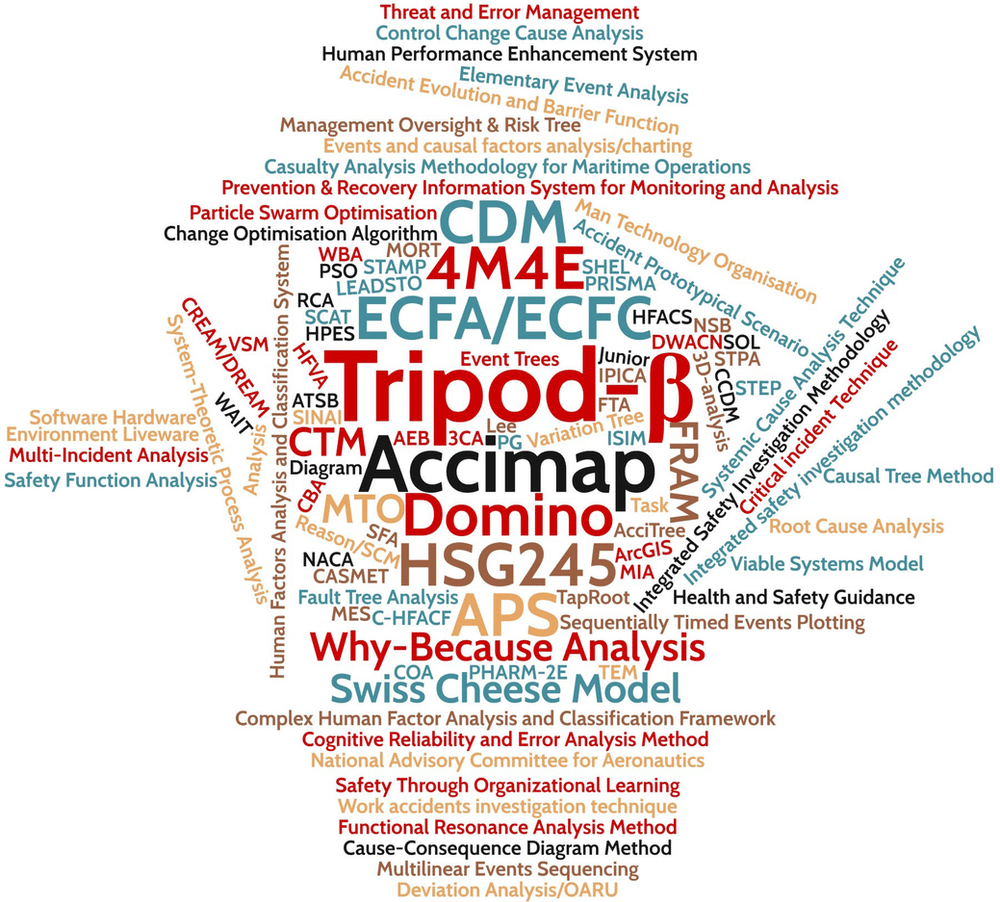

A variety of terms have been used by safety specialists to describe complex STS theory applied to risk management: Safety Differently, Safety-II, Resilience Engineering, Guided Adaptability, and High Reliability Organizations, among others.

A panoply of terms has been employed in efforts to impose order and structure on the idea of complex systems:

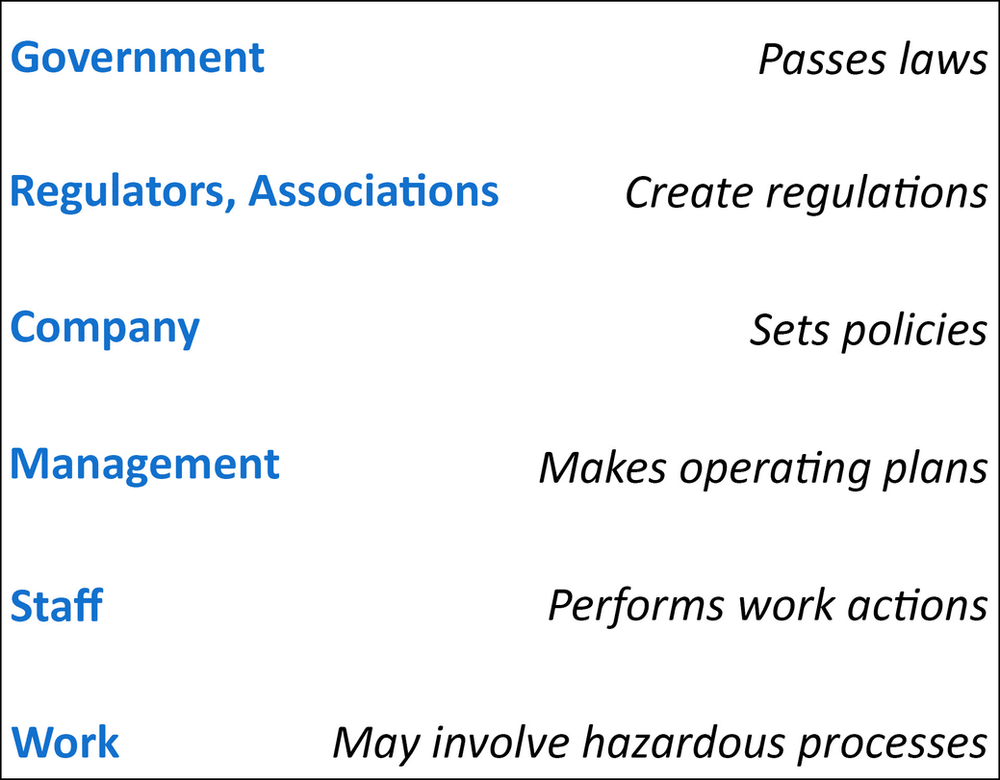

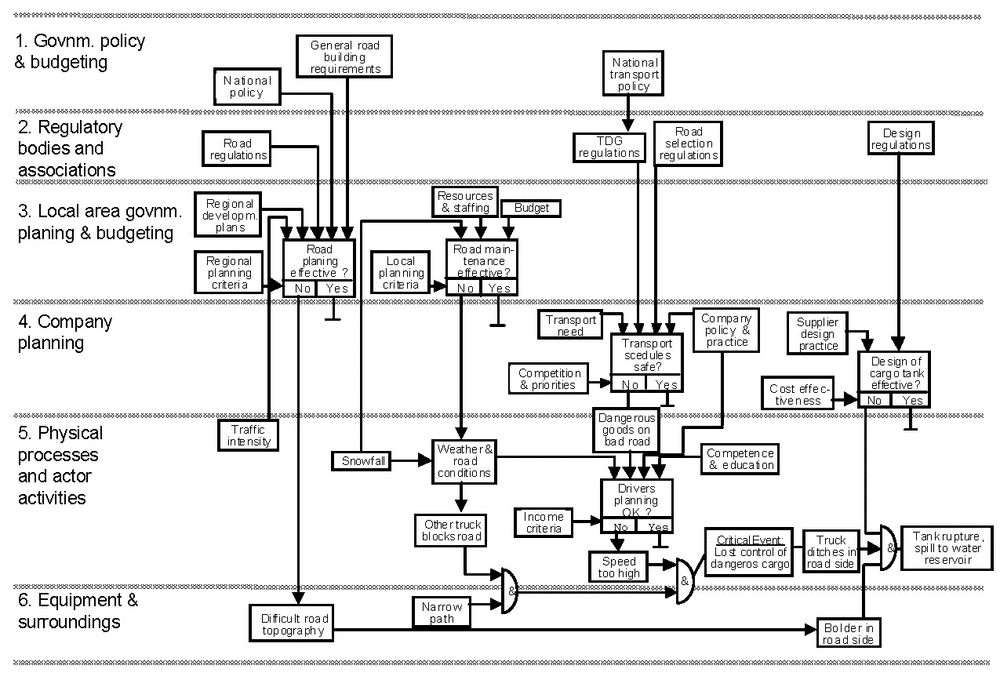

Perhaps the best-known model, however, is the “AcciMap” approach, developed by the Danish professor Jens Rasmussen, whose pioneering work in nuclear safety has been adapted for experiential/adventure travel and other contexts.

Rasmussen saw different levels at which safety could be influenced:

- Government, which can pass and enforce safety laws;

- Regulators and industry associations, such as the Association of Canadian Mountain Guides and Canadian Avalanche Association, which can establish detailed standards;

- Organizations, like individual adventure tourism operators, which can establish sound operating policies to manage risk;

- Managers, such as adventure tourism company directors, who can develop work plans that incorporate good safety planning;

- Line staff, for example wilderness guides, who perform day-to-day activities with prudence and due care, and

- Work tasks, such as running a rock climbing site, which have been designed to have minimal inherent risks.

Rasmussen gave the example of a motor vehicle accident in which a tanker truck rolled over, spilling its contents and polluting a water supply. The analysis identified causal factors at every level (government, regulators and associations, the transportation company, personnel, and work tasks) that contributed to the incident.

But AcciMap, and the AcciMap variants that have evolved over the years, are far from the only models which seek to represent complex sociotechnical systems theory applied to risk management.

For instance, the Functional Resonance Analysis Method (FRAM) models complex sociotechnical systems as an intricate web of interconnecting influences. Primarily used in large industrial applications, it’s less likely to be useful for safety management in the wilderness travel or outdoor adventure context.

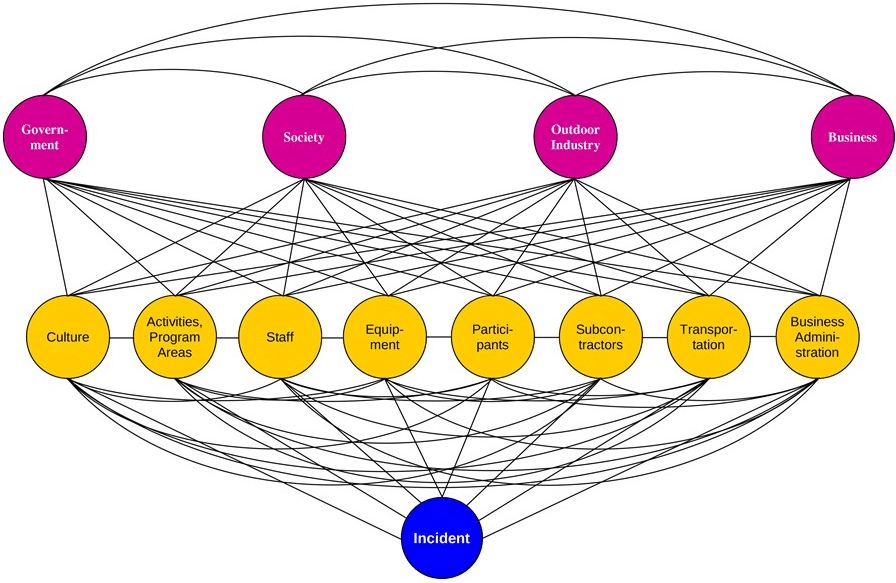

The Risk Domains Model

A model exists, however, that adapts the complex sociotechnical systems elements of AcciMap and similar frameworks, and applies them to the contexts of adventure tourism and related experiential, wilderness, outdoor and travel programs.

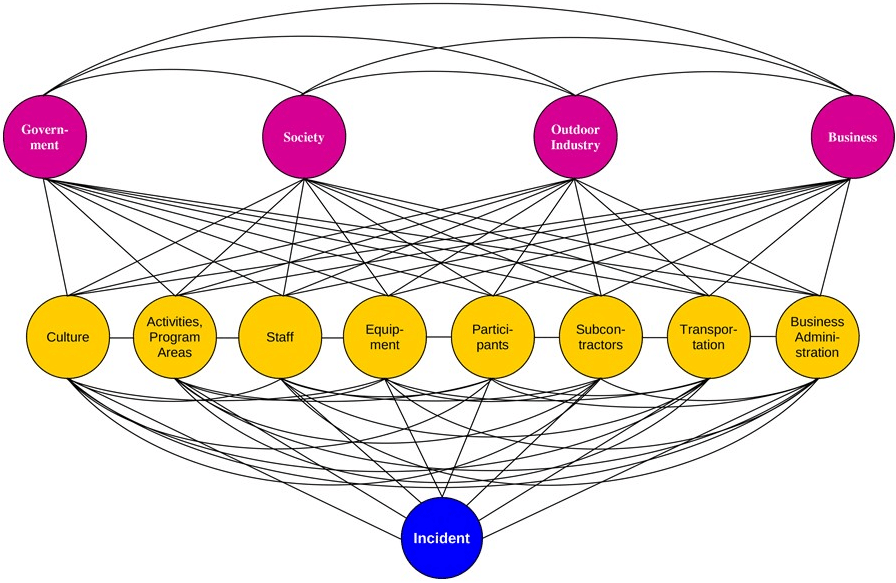

This is the Risk Domains model, pictured below.

Here we can see eight “direct risk domains:”

- Safety culture

- Activities & program areas

- Staff

- Equipment

- Participants

- Subcontractors (vendors/providers)

- Transportation

- Business administration

Each of these areas holds certain risks. For example, a mountain site in winter may carry avalanche risk. The participant domain brings the risk of clients who are poorly trained in safety practices, fail to follow safety directions, or are medically unsuitable for an activity. Subcontractors may cut safety corners.

In addition, there are four “underlying risk domains:”

- Government

- Society

- Outdoor Industry

- Business

Here, we see that sound government regulation can support good safety outcomes; a society that values safety and human life encourages good safety practices; industry associations like the Adventure Tour Operators Association of India and the Adventure Travel Trade Association can provide important support for good risk management; and large corporations that feel a civic responsibility will not impede the government’s capacity to enforce sensible safety regulation.

Risks in any of these domains can combine to directly or indirectly lead to an incident, as we see illustrated in the web of interconnections between each risk domain and an ultimate incident.

Managing risks within the context of the Risk Domains model has two components.

First, in each risk domain, risks are identified that may apply to an organization.

For example, an adventure tourism company may recognize that it must intentionally develop a positive safety culture each season with its new-to-the-organization guides, so that staff do not get carried away with their enthusiasm for adventure and put themselves or their clients unnecessarily at risk.

And adventure travel operators may need to invest in business administration-related protections to secure medical form confidentiality, protect against embezzlement or other theft, and guard against ransomware and other IT risks.

Policies, procedures, values and systems should then be instituted to bring the risks identified as potentially present in each domain down to a socially acceptable level.

Policies might include, for example, a rule that safety briefings are held before each activity, or that incident reports are generated after all non-trivial incidents.

Procedures might include how and when to scout rapids on a whitewater paddling expedition, or belaying practices while climbing with clients.

Values might include, for instance, the value that safety is important, and should be taken seriously.

And systems might include medical screening, guide training, or a system for assessing suitability of subcontractors (providers).

The idea is not to bring risks to zero, which would paralyze any outdoor adventure experience, but to bring them to a level where, if an incident occurs, stakeholders (such as next of kin, incident survivors, news media, and regulators) understand that reasonable precautions were taken against reasonably foreseeable harms, even though an incident did occur, as is inevitably the case from time to time.

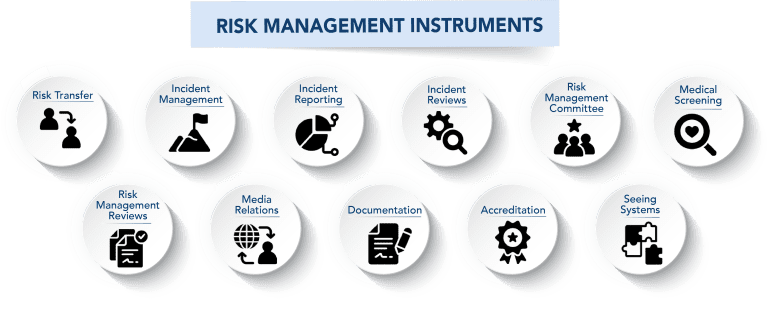

Risk Management Instruments

In addition to instituting specific policies, procedures, values and systems to maintain identified risks in all relevant risk domains at a socially acceptable level, there are broad-based tools, or instruments, that can be applied to manage risks across multiple or all risk domains at the same time.

This is the second component in the Risk Domains model for managing risks.

These risk management instruments are:

- Risk Transfer

- Incident Management

- Incident Reporting

- Incident Reviews

- Risk Management Committee

- Medical Screening

- Risk Management Reviews

- Media Relations

- Documentation

- Accreditation

- Seeing Systems

Risk Transfer refers to the presence of insurance policies, subcontractors who assume risk, and risk transfer documents like liability waivers.

Incident Management refers to having a documented and practiced plan for responding to emergencies.

Incident Reporting means documenting safety incidents and their potential causes, analyzing incidents individually and in the aggregate, and then developing and disseminating responses (in the form of revised training materials, safety reports, new policies, etc.) that address both the incidents and the trends and patterns they illuminate.
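Analyzing incidents in the aggregate can start with something as simple as tallying reports by category. The Python sketch below assumes each report is tagged with one of the Risk Domains model’s direct risk domains and a season label; the `IncidentReport` type, the helper functions, and the sample reports are all hypothetical.

```python
from collections import Counter
from typing import Iterable, List, NamedTuple, Optional


class IncidentReport(NamedTuple):
    season: str
    domain: str       # a direct risk domain, e.g. "Transportation"
    description: str


# Hypothetical sample reports, for illustration only.
REPORTS: List[IncidentReport] = [
    IncidentReport("2023", "Transportation", "van slid on icy road"),
    IncidentReport("2023", "Equipment", "worn crampon strap found in the field"),
    IncidentReport("2024", "Transportation", "driver speeding to keep to schedule"),
    IncidentReport("2024", "Transportation", "near miss at trailhead turnoff"),
    IncidentReport("2024", "Participants", "undisclosed medical condition"),
]


def counts_by_domain(reports: Iterable[IncidentReport],
                     season: Optional[str] = None) -> Counter:
    """Tally reports per risk domain, optionally for a single season."""
    return Counter(r.domain for r in reports
                   if season is None or r.season == season)


def rising_domains(reports: List[IncidentReport],
                   earlier: str, later: str) -> List[str]:
    """List domains whose report count increased from one season to the next."""
    before = counts_by_domain(reports, earlier)
    after = counts_by_domain(reports, later)
    # A Counter returns 0 for domains with no reports in a season.
    return sorted(d for d in after if after[d] > before[d])


if __name__ == "__main__":
    print(counts_by_domain(REPORTS).most_common())
    print(rising_domains(REPORTS, "2023", "2024"))  # ['Participants', 'Transportation']
```

A rising count is a prompt for review rather than a verdict: more reports can reflect a healthier reporting culture as much as worsening conditions.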

Incident Reviews means having a process for the formal review of major incidents, by internal or external review teams.

Risk Management Committee indicates a group of individuals, including those from outside the organization, who have relevant subject matter expertise, and who can provide resources and unbiased guidance.

Medical Screening refers to structures to ensure that participants and staff are medically well-matched to their circumstances.

Risk Management Reviews are formal, periodic analyses of the organization’s safety practices.

Media Relations refers to staff who have the training and materials to work effectively with news media in the case of a newsworthy safety incident.

Documentation refers to written or other guidance that records what should be done (e.g. in the form of field staff handbooks or employee manuals), and what has been done (e.g. incident reports, SOAP notes, check-offs, and training sign-in sheets).

Accreditation refers to recognition by an authoritative body, such as the Association for Experiential Education, that widely accepted industry standards have been met.

Seeing Systems refers to employing complex sociotechnical systems theory in the design and implementation of adventure tourism safety practices.

Together, the application of policies, procedures, values and systems to manage identified risks, along with the use of broadly effective risk management instruments to address risks across many risk domains, can help an outdoor adventure program keep risks at or below a socially acceptable level.

Sidebar: Limitations of Risk Assessments

At this point, we’ve explored some of the history of safety thinking, and a progression of models that attempt to represent why incidents occur and, by extension, how they might be prevented.

We’ve focused on the Risk Domains model, which is a relatively easy-to-use framework designed explicitly for outdoor adventure and related organizations.

We talked about how one aspect of the Risk Domains model is identifying, within each risk domain, specific risks that an organization may face, and instituting policies, procedures, values and systems to manage those risks so that they do not exceed a socially acceptable level.

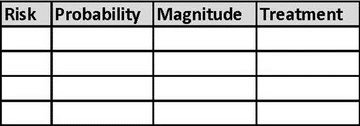

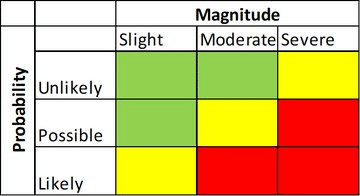

This involves performing a risk assessment: identifying risks, classifying them by probability and severity, and then establishing appropriate risk mitigation measures.

This is known as a Probabilistic Risk Assessment, or PRA.

In a PRA, a spreadsheet lists each risk along with its probability and severity:

The risks least likely to be encountered, and with the mildest consequences (in green, below), are likely to be accepted.

The risks most likely to be experienced, and which may have significant negative impacts (in red), are likely to be eliminated, or significantly reduced.
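The spreadsheet approach can be sketched in a few lines of Python. This assumes 1-to-5 ordinal scales for probability and severity, scores each risk as probability times severity, and uses a three-band green/amber/red scheme; the scales, cutoffs, and example risks are hypothetical and not taken from ISO 31000 or any particular operator.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Risk:
    name: str
    probability: int  # assumed scale: 1 (rare) to 5 (almost certain)
    severity: int     # assumed scale: 1 (negligible) to 5 (catastrophic)

    @property
    def score(self) -> int:
        return self.probability * self.severity


def rating(risk: Risk) -> str:
    """Map a risk score to a color band; the cutoffs are hypothetical."""
    if risk.score <= 4:
        return "green"  # likely accepted
    if risk.score <= 12:
        return "amber"  # mitigate where reasonably practicable
    return "red"        # eliminate or significantly reduce


# Hypothetical entries in a risk register.
REGISTER: List[Risk] = [
    Risk("Sunburn on a day hike", probability=3, severity=1),
    Risk("Vehicle collision en route to trailhead", probability=2, severity=5),
    Risk("Avalanche at a winter climbing site", probability=3, severity=5),
]


if __name__ == "__main__":
    # List risks from highest to lowest score, as a spreadsheet might.
    for risk in sorted(REGISTER, key=lambda item: item.score, reverse=True):
        print(f"{risk.name:<42} score={risk.score:>2}  {rating(risk)}")
```

Each row is scored in isolation. Nothing in this structure represents how two individually modest risks might combine, which is why the limitations discussed in the rest of this sidebar matter.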

We see this model in the international standard for risk management, ISO 31000. Here, risks are identified, classified, and treated.

Let’s take a moment to look more closely at this PRA process.

While risk assessments are very common across many industries—and, on some level, people perform risk assessments constantly, in their daily life—they do have limitations.

The core limitation is that risk assessments are a relatively simplistic approach to understanding and mitigating potential risks. This means that they are relatively ineffective, unless coupled with more advanced approaches for managing risk—specifically, those approaches informed by complex sociotechnical systems theory.

PRAs typically assess only direct, immediate risks from specific activities, locations or populations, such as weather, traffic hazards, and equipment failure.

They typically fail to account for underlying risk factors such as poor safety culture, financial pressures, deficits in training and documentation, or lack of regulatory oversight.

They also typically fail to account for human factors in error causation: cognitive biases and cognitive shortcuts (heuristics).

Finally, they typically fail to consider systems effects: how multiple risks interact in complex and unpredictable ways that lead to incidents.

Simply put, relying on PRAs as a principal risk management tool does not align with what research in complex sociotechnical systems and human factors in error causation tells us about how incidents occur. PRAs are therefore ineffective as a comprehensive risk management tool or a stand-alone indicator of good risk management.

Outdoor safety researchers Clare Dallat et al. note that the research suggests “…current risk assessment practice is not consistent with contemporary models of accident causation.”

This is not a problem for organizations that couple risk assessments with other components of an overall safety system. But organizations whose culture treats risk assessments as the leading tool for managing risk are failing to use the best and most current thinking about incident prevention.

Applying Contemporary Safety Science to Adventure Tourism Operations

There are three specific areas we’ll look at as we consider how to take what we’ve discussed so far about safety science, and apply it to the world of adventure tourism organizations and related travel, wilderness, outdoor education/recreation and experiential programs.

These are:

- Risk Assessments,

- Safety Culture, and

- Systems Thinking.

Safety Science Applied to Adventure Tourism: Risk Assessments

We now recognize that risk assessments have an important role to play in identifying and mitigating relatively obvious and front-line risks, as long as PRAs are not seen as the predominant method for managing risk.

An adventure tourism operator that appreciates the value of examining straightforward and relatively predictable risks, but which also recognizes that additional steps must be taken to guard against incidents that spring from unanticipated combinations of risk factors, balances appropriate use of risk assessments with systems-informed safety practice.

Safety Science Applied to Adventure Tourism: Safety Culture

Culture, as you recall, is one of the areas in which risks reside, according to the Risk Domains model described above. But what do we mean by culture? And how does culture relate to safety?

We can define culture as an integrated pattern of individual and organizational behavior, based on shared beliefs and values.

Behavior, then, springs from beliefs and values. Actions are visible; yet, the beliefs and values from which they come are not.

What, then, do we mean by safety culture?

We can define safety culture as the influence of organizational culture on safety.

More specifically, we can understand safety culture as the values, beliefs, and behaviors that affect the extent to which safety is emphasized over competing goals.

This raises the questions: is our safety culture okay? How do we evaluate our safety culture?

We can assess the safety culture of an organization through seven dimensions:

- Leadership from the top

- Inclusion

- Suffusion

- Culture of Questioning

- Collaboration

- Effective Communication

- Just Culture

Survey instruments exist to help individuals evaluate the quality of their organization’s safety culture. Participants in the 40-hour online training, Risk Management for Outdoor Programs, for example, complete a detailed organizational self-assessment that helps them rate the culture of safety in their workplace.

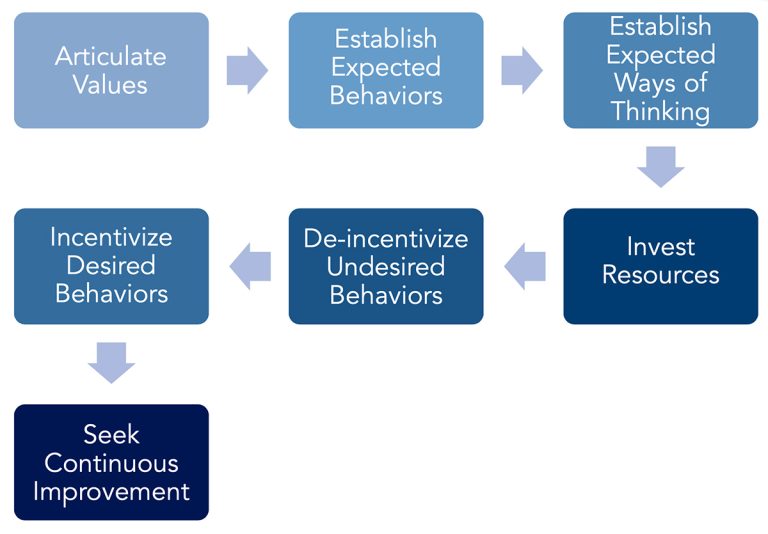

An evaluation might identify opportunities for improvement in safety culture. How does an organization of any size or shape go about shifting something as abstract as its safety culture: the values and beliefs of its employees, volunteers, customers, and other stakeholders (such as company owners)?

Shifting culture is a change management process. It’s the same general change management process for making any kind of change within any group or team, regardless of the topic or trajectory.

Changing an institutional culture is not easy. It may be helpful, however, to follow an established change management process, as below:

Here, top leadership repeatedly states the importance of safety. What that looks like is made clear, both in day-to-day actions and in the use of systems thinking.

Time, money, and political capital are needed to build momentum for change. Appropriate actions should be encouraged, and undesirable ones disincentivized. Finally, a management system to continually evaluate and improve change efforts should be implemented.

Just Culture

One way outdoor adventure programs can exhibit a positive safety culture is in how management responds when an incident occurs.

It can be tempting to blame, by default, the person closest to the incident for causing the problem.

Consider an example: a guide driving to the trailhead took a corner too fast and skidded off the road, damaging the vehicle. “You were told to drive carefully, so this is your fault!”

However, this doesn’t account for the fact that management packed the guide’s schedule so tightly that people were in a rush. And the company owner repeatedly drove too fast, including when guides were present. So, who is really to blame?

When an incident occurs, it’s useful to look at the underlying factors that led to the mishap. To avoid unfairly blaming people, and to most effectively identify and address the elements that actually fostered the incident, it’s important to examine causal factors throughout the entire system.

When we focus on what went wrong, rather than who “caused” the problem, we’re practicing Just Culture.

Just Culture empowers people to report incidents, since they won’t fear getting into trouble, and it helps the organization address the actual underlying safety issues that helped bring about the incident.

Safety Science Applied to Adventure Tourism: Systems Thinking

The final area we’ll focus on is how contemporary risk management theory and modelling can be applied to outdoor adventure programs through specific uses of systems thinking.

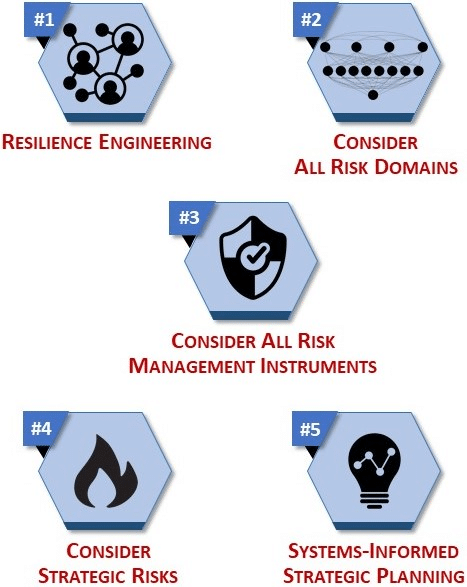

There are five principal approaches we’ll consider:

- Resilience engineering

- Considering all risk domains

- Considering all risk management instruments

- Considering strategic risks

- Employing systems-informed strategic planning

We’ll address each, one by one.

#1: Resilience Engineering

The concept of resilience engineering stems from a central idea about complex systems: there will be breakdowns in the system sometime, somewhere, but we don’t know when, or how, or which risk domains will be involved. We can’t create rules to address every potential problem. And we don’t know how to stop humans from committing errors that lead to incidents.

Therefore, we need to build into the system a capacity to withstand unanticipated breakdowns, from wherever and whenever they occur, without the system falling apart.

This is the crux of resilience engineering.

There are four principal approaches that adventure tourism companies can take to apply principles of resilience engineering to their programs:

- Build In Extra Capacity

- Build In Redundancy

- Employ an Integrated Safety Culture

- Foster Psychological Resilience

Extra Capacity

Extra capacity means having reserves of staff, equipment, transportation options, and so on, so that operations can continue more or less normally during times of significantly increased demand or reduced supply.

For an outdoor adventure program, this may mean having a staffing structure with standby employees who are ready to step in if one or more persons are unable to perform their duties, for example, due to possible COVID-19 exposure.

It may also mean having backup equipment available in case items are lost, stolen, fall into a crevasse, or the like.

And it means having staff trained to be able to perform at a level higher than what would normally be anticipated. For instance, guides leading a rafting trip in class III water should be comfortable paddling in class IV water. This way, if there is an emergency, personnel are able to effect a rescue without exceeding the level of their own abilities.

Redundancy

Commercial airplanes have multiple flight computers and multiple pilots, so if one stops functioning normally, another is available. This illustrates the principle of redundancy.

Outdoor adventure programs, too, are wise to judiciously use redundancy to build a resilient safety system.

For example, an organization may have multiple ways to identify emerging safety issues: incident report forms, a written report from guides at expedition’s end, feedback systematically gathered from clients, periodic safety audits by a third party, and so on.

Wilderness expeditions should have multiple guides per group, so if one leader is incapacitated, the other can perform first aid, rescue, evacuation, or other functions. Both should be trained in first aid, and for remote expeditions, clients should be trained in basic first aid and CPR as well, in case guides are simultaneously injured.

Multiple telecom devices should be available to communicate in an emergency. If the radios aren’t working, a cell or satellite phone will come in handy.

And, as a final example, multiple evacuation options should be available from a wilderness destination, so if one trail is too close to the rapidly expanding wildfire, an alternate route is pre-identified.

Integrated Safety Culture

Integrated safety culture, as we discussed above, means balancing rules-based safety with allowing personnel to use their judgment.

Psychological Resilience

When a crisis occurs, individuals may rise to the occasion, drawing on previously unknown wells of inner strength, grit, and perseverance.

In other cases, during an emergency, individuals may freeze, flee, or quit.

Outdoor adventure companies that find ways to recruit, hire, train and retain staff who have a positive attitude towards challenge are better positioned: when a major stress hits the organization’s safety system, staff dig in and work hard to resolve the problem, even in the face of great challenge and uncertainty.

#2: Consider All Risk Domains

When an adventure tourism operator seeks to build a risk management system that can withstand stressors and still perform, it’s useful to look at all the areas from which risks, and by extension system breakdowns, can emerge. The eight direct risk domains and four underlying risk domains are illustrated below.

When a program is considering opening up a new activity (for example, a climbing-focused company expanding into river rafting trips), a new location (say, the first trips to Costa Rica or Sri Lanka), or a new population (e.g., older persons on luxury international excursions), it’s wise to check whether all parts of the organization are fully prepared.

Does the marketing team have accurate promotional materials? Are liability waivers updated to allow for informed consent to new risks? Are staff training checkoffs updated for the new location? Do logistics staff have all the equipment ready to go?

In addition, all domains should be considered when conducting incident reviews and risk management reviews (safety audits), and when evaluating incident reports and making recommendations for safety improvements based on the results.

#3: Consider All Risk Management Instruments

While a small startup adventure tourism operator may not employ each of these eleven risk management instruments due to capacity constraints, most larger outdoor adventure trip providers would be well-served to employ each one. Doing so adds layers that strengthen the organization’s capacity to prevent incidents, and to mitigate their impacts should a major mishap occur.

#4: Consider Strategic Risks

Strategic risks are those that pose a long-term threat to an experiential adventure program’s viability.

Demographic, market and social shifts may slowly reduce program attendance and financial sustainability over time. A trend away from outdoor recreation towards electronic entertainment, for instance, may gradually erode the viability of adventure tourism organizations.

Likewise, political and geopolitical concerns can influence the viability of an outdoor adventure program. Politically-driven pandemic mismanagement can restrict tourist travel; authoritarianism and civil unrest can make international travel destinations unattractive.

Finally, the global climate crisis is an exemplar of a strategic risk affecting adventure tourism. Outdoor adventure programs have been harmed by the climate emergency in myriad ways, from wildfires and smoke closing recreation spaces to increased risk from flooding, and much more. These impacts are widely expected to worsen for decades.

Although smaller programs likely don’t have much capacity to engage in a detailed review of these strategic risks, all outdoor adventure organizations are wise to pay attention to long-term threats to their viability, as resources permit.

#5: Systems-Informed Strategic Planning

The fifth and final application of systems thinking to adventure tourism safety is in systems-informed strategic planning around risk management and outdoor travel.

We often tend to hear what we want to hear (confirmation bias). And we sometimes unconsciously avoid asking ourselves about difficult issues with no easy resolution, such as contemplating shutting down a beloved program due to increasing safety risks.

Organizations can approach an issue such as safety in a variety of ways that help teams think lucidly and creatively about it, unhindered by bias or by inaccurate mental shortcuts (heuristics).

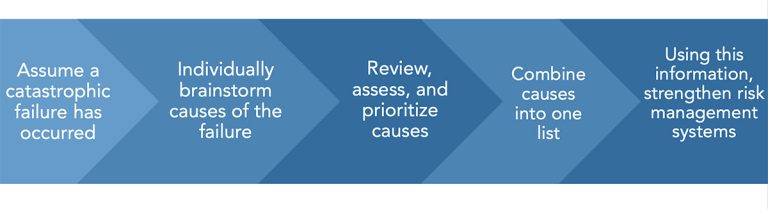

One method that has been used successfully in the outdoor adventure program context is to visualize a hypothetical catastrophe that has occurred at the program. Individuals then brainstorm ideas about why this critical incident occurred. Recommendations are generated and can be put into place before any catastrophe actually occurs.

This method of visualizing a fictional catastrophe and identifying preventive measures is known as a “pre-mortem.”

At one outdoor adventure organization, after a trip leader was killed by lightning, the CEO gathered staff together and asked, “Who is the next person who is going to die? How will they be killed?”

The staff group, which had representatives from all levels of the organization, from entry-level to executive, was able to bring up a number of potential safety issues that had never been raised before, as there had never been a suitable forum in which to discuss them.

Conclusion and Further Resources

What happened following the tragic death of a climber in the avalanche incident in the Canadian Rockies?

In addition to the devastating impact on the person who died and on her family and loved ones, the incident deeply affected survivors and the Canadian mountain guiding industry, and it continues to do so.

So many things were done right in the days and moments leading up to the avalanche. No clear and widely accepted conclusions have been established as to how the incident could definitively have been prevented. Further investigation or thought, however, may lead to additional clarity.

A report on the incident published by the ACMG recommended making additional time for companion rescue training prior to climbing in avalanche terrain.

The report also identified the value of increased information-sharing about hazards specific to particular ice climbs, and an effort involving Avalanche Canada to that end is underway.

A variety of technical and non-technical concerns were also raised by a survivor of the avalanche.

The complexity of dealing with the psychological needs of survivors became clear post-incident, as did the need for additional resources for small adventure tourism operators to be better able to address this complex set of needs. While this cannot prevent a future avalanche incident, the ACMG has invested in developing and disseminating long-term accident response resources, which can reduce the immense psychological toll often experienced by survivors and those involved in a critical incident. Other changes at the ACMG are ongoing. A support network, Mountain Muskox, was also established to provide skilled psychological support for those involved in critical incidents in the mountains.

In Summary

Many outdoor adventure programs have an enviable safety record, stretching back for years. But a positive history is not a guarantee of future success.

As the field of safety science matures, risk management theories and modelling continue to advance. These new resources can and should be employed by adventure tourism operators, to the extent possible.

We’ve seen how safety science has evolved over the last 100 years from simplistic linear models of incident causation to a view of incidents as springing unpredictably out of a complex system involving people and technology: a complex sociotechnical system.

We’ve looked at a variety of models that attempt to illuminate this theory, with AcciMap being a leading framework. The Risk Domains model provides a systems-based representation of how incidents occur, customized for adventure tourism organizations and similar outdoor and experiential programs.

And we’ve identified several ways that adventure tourism organizations can apply the best current thinking in risk management to improve safety in practical ways.

These include:

- Using risk assessments in their proper role, without over-relying on them

- Building and sustaining a positive culture of safety

- Incorporating systems thinking into adventure tourism program safety by:

  - Applying resilience engineering ideas, such as extra capacity, redundancy, integrated safety culture and psychological resilience, to outdoor adventure programming;

  - Considering all risk domains when managing risks;

  - Considering the use of all applicable risk management instruments;

  - Considering strategic risks, such as demographic shifts, political concerns and climate change, and

  - Using systems-informed strategic planning to generate creative safety solutions.

For More Information

Other opportunities exist for continued learning about how adventure tourism enterprises can protect their participants, their staff, their organization and the community at large.

More information about the systems thinking ideas here can be found in the textbook Risk Management for Outdoor Programs: A Guide to Safety in Outdoor Education, Recreation and Adventure.

A 40-hour online course, delivered over one month, provides an opportunity to explore these topics in greater depth, and to develop a systems-informed safety improvement plan customized for one’s own program. This class, Risk Management for Outdoor Programs, delivers a thorough and detailed training in best practices in risk management for adventure tourism organizations and related travel, experiential and outdoor programs.

Adventure tourism operators can offer thrilling and fulfilling experiences that provide the memories of a lifetime. The value these experiences bring to satisfied and delighted clients is clear. And so too is our responsibility to keep abreast of advances in risk management that can help adventure tourism programs provide exciting and successful outdoor experiences with excellence in quality and risk management.